Figure: the training loop, Text data → Batch → Predict → Loss → Gradients → Update, with Update feeding back into Predict.

Module 16: How AI Works

MGMT 675: Generative AI for Finance

What Is an LLM?

Every AI tool we’ve used this semester (Claude, ChatGPT, Gemini) is built on a large language model (LLM). Modern versions also handle images and other inputs via similar mechanisms, but we’ll focus on text.

An LLM is a neural network that:

- Takes a sequence of text as input

- Produces a probability distribution over possible next words (more precisely, next tokens)

- Samples one, appends it, and repeats

That’s it. When Claude writes a paragraph, it generates one token at a time, each time asking: “given everything so far, what should come next?” The Sampling from the Distribution section below explains how the model picks from that distribution, and how you can control the randomness.
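The predict, sample, append loop can be sketched in a few lines of Python. This is a minimal sketch: `TOY_MODEL` and `next_token_distribution` are hypothetical stand-ins for a real LLM, which would compute the distribution from the entire sequence so far rather than from the last token only.

```python
import random

# Hypothetical stand-in for an LLM: maps the most recent token to a
# probability distribution over the next token. A real LLM conditions on
# the entire sequence so far and scores ~100,000 possible tokens.
TOY_MODEL = {
    "<start>": {"Revenue": 0.6, "Margins": 0.4},
    "Revenue": {"grew": 0.7, "fell": 0.3},
    "Margins": {"grew": 0.5, "fell": 0.5},
    "grew": {"<end>": 1.0},
    "fell": {"<end>": 1.0},
}


def next_token_distribution(tokens):
    """Return {token: probability} for the next token (hypothetical model)."""
    return TOY_MODEL[tokens[-1]]


def generate(max_tokens=10, seed=None):
    rng = random.Random(seed)
    tokens = ["<start>"]
    for _ in range(max_tokens):
        dist = next_token_distribution(tokens)                # 1. predict
        candidates, probs = zip(*dist.items())
        nxt = rng.choices(candidates, weights=probs, k=1)[0]  # 2. sample one
        if nxt == "<end>":                                    # stop token
            break
        tokens.append(nxt)                                    # 3. append, repeat
    return " ".join(tokens[1:])


for seed in range(3):
    print(generate(seed=seed))
```

Different seeds can produce different sentences from the same starting point, which is the mechanism behind getting different answers to the same prompt.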

From Next-Word Prediction to Intelligence

This seems too simple to produce intelligent behavior. But when you train next-word prediction on trillions of words:

- To predict the next word in a math proof, the model must learn math

- To predict the next word in a legal brief, it must learn legal reasoning

- To predict the next word in a financial analysis, it must learn finance

The training objective is simple. The capabilities that emerge at scale are not. Whether this constitutes “understanding” is debated — but the capabilities are real and useful, which is what matters for practitioners.

Sampling from the Distribution

At each step, the model outputs a probability for every token in its vocabulary (~100,000 tokens). How does it pick one?

- Temperature: divides the model’s raw scores (logits) by T before converting them to probabilities. T=0 is treated as a special case: always pick the most likely token (greedy decoding), although provider APIs may still vary slightly from run to run. Higher T flattens the distribution → more randomness and creativity; lower T sharpens it → more focused and predictable.

- Top-k: only consider the k most probable tokens (e.g., k=50), set all others to zero, renormalize, then sample. Prevents the model from picking very unlikely tokens.

- Top-p (nucleus sampling): only consider the smallest set of tokens whose cumulative probability ≥ p (e.g., p=0.9), renormalize, then sample. Adapts automatically: when the model is confident, few tokens qualify; when uncertain, more are included.
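The three controls can be sketched as one function that turns a vector of raw scores (logits) into a sampled token id. This is a minimal NumPy sketch under stated assumptions: the logits are made up, and both filters here are computed from the temperature-scaled distribution; real libraries differ in the order and details of these steps.

```python
import numpy as np


def sample_next_token(logits, temperature=1.0, top_k=None, top_p=None, rng=None):
    """Pick a token id from raw scores using temperature, top-k, and top-p."""
    rng = rng if rng is not None else np.random.default_rng()
    logits = np.asarray(logits, dtype=float)
    if temperature == 0:
        return int(np.argmax(logits))             # greedy: always the top token

    # Temperature: divide scores by T, then softmax into probabilities.
    scaled = logits / temperature
    probs = np.exp(scaled - scaled.max())
    probs /= probs.sum()

    order = np.argsort(probs)[::-1]               # token ids, most likely first
    keep = np.ones(len(probs), dtype=bool)
    if top_k is not None:
        keep[order[top_k:]] = False               # drop everything below rank k
    if top_p is not None:
        cumulative = np.cumsum(probs[order])
        # Smallest prefix of the ranked tokens whose total probability >= top_p.
        n_keep = int(np.searchsorted(cumulative, top_p)) + 1
        keep[order[n_keep:]] = False

    probs = np.where(keep, probs, 0.0)
    probs /= probs.sum()                          # renormalize the survivors
    return int(rng.choice(len(probs), p=probs))


logits = [2.0, 1.0, 0.5, -1.0]  # hypothetical scores for a 4-token vocabulary
rng = np.random.default_rng(0)
print(sample_next_token(logits, temperature=0))   # always 0 (the top token)
print(sample_next_token(logits, temperature=0.7, top_p=0.9, rng=rng))
```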

These parameters interact, and provider APIs typically expose several of them at once (Anthropic’s API, for example, accepts temperature, top_p, and top_k). Lower temperature + lower top-p = safe, predictable output (good for structured tasks like extraction). Higher temperature + higher top-p = varied, creative output (good for brainstorming). This is why the same prompt gives different answers each time, and why you can tune that behavior.

The Precision–Exploration Tradeoff

There is no single “right” temperature. Within a single response, the model faces competing needs:

Precision

- Low temperature / tight top-p

- Best when the next token is highly constrained — syntax, formulas, known facts

- Picking the top token avoids errors

Exploration

- Higher temperature / wider top-p

- Best when the task requires creativity or search — brainstorming, novel arguments, complex reasoning

- Diversity helps the model find better paths

But temperature is set once for the whole response — so every generation is a compromise.
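To make the conflict concrete, the sketch below applies the same temperature to two hypothetical positions in one response. The logits are invented: at one position a single token is clearly correct, at the other several options are comparably good.

```python
import numpy as np


def softmax_with_temperature(logits, temperature):
    z = np.asarray(logits, dtype=float) / temperature
    p = np.exp(z - z.max())
    return p / p.sum()


# Hypothetical logits at two positions in the same response.
constrained = [5.0, 2.0, 1.5, 1.0]  # e.g., next symbol in a formula: one right answer
open_ended = [2.0, 1.8, 1.6, 1.4]   # e.g., which argument to make next: several good options

for T in (0.1, 1.0, 1.5):
    p_c = softmax_with_temperature(constrained, T)
    p_o = softmax_with_temperature(open_ended, T)
    # Precision: chance of NOT picking the one right token.
    # Exploration: how concentrated the open-ended choice is on one option.
    print(f"T={T}: P(error, constrained)={1 - p_c[0]:.3f}  "
          f"P(top option, open-ended)={p_o[0]:.3f}")
```

No single T makes both numbers small: lowering T cuts errors at the constrained position but also pushes the open-ended position toward picking the same option every time.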

Zhang et al. (2025) call this the precision–exploration conflict. They showed that models generating code improved dramatically when trained on their own best outputs sampled at varied temperatures — capturing the benefits of both precision and exploration. The insight generalizes: the optimal sampling strategy varies token by token, not just task by task.

The Four Key Ideas

Idea 1: Tokens

LLMs don’t process letters or words — they process tokens, which are common sub-word chunks.

Example

“Embedding” → [“Em”, “bed”, “ding”]

“unhelpful” → [“un”, “help”, “ful”]

“chatbot” → [“chat”, “bot”]

- A typical LLM has a vocabulary of ~100,000 tokens

- Each token is assigned a unique integer ID

- Claude’s context window (e.g., 200K tokens) is measured in these units

Tokenization is a practical compromise: character-level would be too slow; word-level can’t handle new words. Sub-word tokens balance vocabulary size with flexibility.
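The splitting idea can be sketched with a greedy longest-match tokenizer over a hypothetical toy vocabulary chosen to reproduce the examples above. This is a simplification, not how production tokenizers work: real vocabularies are learned from data (for example with byte-pair encoding), and the actual splits for these words depend on the specific tokenizer.

```python
# Hypothetical toy vocabulary. Real LLM vocabularies have ~100,000 entries
# learned from data.
VOCAB = ["Em", "bed", "ding", "un", "help", "ful", "chat", "bot"]
# Single letters as a fallback, so any ASCII word can still be tokenized.
VOCAB += [chr(c) for c in range(ord("a"), ord("z") + 1)]
VOCAB += [chr(c) for c in range(ord("A"), ord("Z") + 1)]
TOKEN_TO_ID = {tok: i for i, tok in enumerate(VOCAB)}  # each token gets an integer ID


def tokenize(word: str) -> list[str]:
    """Split a word by repeatedly taking the longest vocabulary match."""
    tokens = []
    i = 0
    while i < len(word):
        # Try the longest substring starting at position i first.
        for j in range(len(word), i, -1):
            piece = word[i:j]
            if piece in TOKEN_TO_ID:
                tokens.append(piece)
                i = j
                break
        else:
            raise ValueError(f"cannot tokenize character {word[i]!r}")
    return tokens


def encode(word: str) -> list[int]:
    """Map a word to the integer IDs the model actually sees."""
    return [TOKEN_TO_ID[t] for t in tokenize(word)]


for word in ["Embedding", "unhelpful", "chatbot", "cat"]:
    print(word, tokenize(word), encode(word))
```

The single-letter fallback shows why sub-word vocabularies never hit an unknown word: an unfamiliar word like “cat” (absent from this toy vocabulary as a whole) still decomposes into smaller known pieces, just less efficiently.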

Idea 2: Embeddings

Each token is represented as a vector — a list of thousands of numbers.

\[\text{"revenue"} \rightarrow [0.12,\; -0.83,\; 0.45,\; \ldots,\; 0.07]\]

These vectors are learned during training — the model discovers that:

- “revenue” and “sales” should have similar vectors (they appear in similar contexts)

- “revenue” and “basketball” should have different vectors

Embeddings convert discrete tokens into a continuous space where meaning is encoded as geometry. Nearby vectors = similar meanings. The embedding vectors are not hand-coded — they are learned parameters, trained jointly with the rest of the model.
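“Nearby” is usually measured with cosine similarity, the cosine of the angle between two vectors (1 = same direction, 0 = unrelated, negative = opposed). In the sketch below, all vectors are invented 4-dimensional examples; real embeddings have thousands of learned dimensions.

```python
import numpy as np

# Hypothetical 4-dimensional embeddings, invented for illustration.
emb = {
    "revenue":    np.array([0.12, -0.83, 0.45, 0.07]),
    "sales":      np.array([0.10, -0.79, 0.50, 0.02]),
    "basketball": np.array([-0.70, 0.20, -0.10, 0.66]),
}


def cosine_similarity(a, b):
    """Cosine of the angle between two vectors."""
    return float(a @ b / (np.linalg.norm(a) * np.linalg.norm(b)))


print(cosine_similarity(emb["revenue"], emb["sales"]))       # close to 1
print(cosine_similarity(emb["revenue"], emb["basketball"]))  # negative
```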

Idea 3: Attention

The core innovation behind modern LLMs. Given a sequence of tokens, attention lets each token look at the other tokens (in a text-generating LLM, every earlier token) and decide which are relevant.

Example

“The company reported strong revenue but warned about supply chain disruptions”

- A simple scan sees “strong revenue” → positive

- Attention sees that “warned” modifies the entire sentiment → net negative

The weights are determined by content rather than by distance, so the model can relate words that are far apart. The next slide explains how.

How Attention Works

For each token, the model computes three vectors:

- Query (Q): “What am I looking for?”

- Key (K): “What do I contain?”

- Value (V): “What information do I provide?”

Each Query is compared to every Key (in an LLM, the Keys of the current and earlier tokens) via a similarity score, a scaled dot product. A softmax turns these scores into weights, so high-similarity pairs get more weight. The output for each token is a weighted blend of the corresponding Values.

The weight matrices that produce Q, K, and V are learned parameters: the model discovers what to attend to during training. In practice, the model runs several attention processes in parallel (multi-head attention), each with its own weights. Heads tend to specialize in different types of relationships (some track syntax, others meaning or long-range dependencies), though the division is rarely this clean.

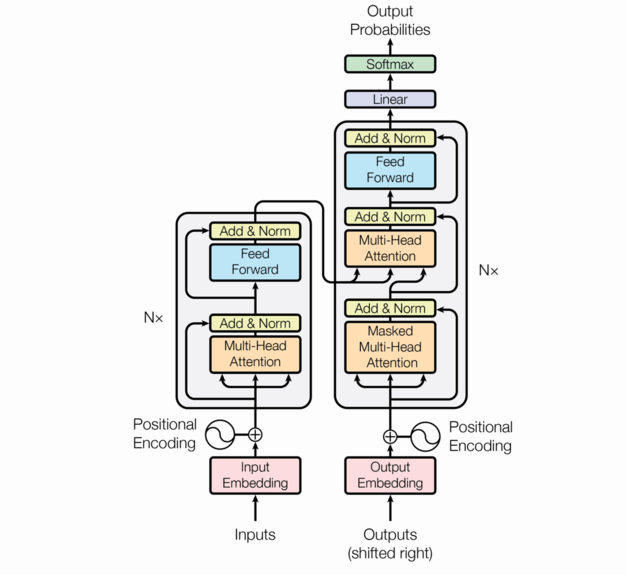
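The Q/K/V computation for a single attention head can be sketched in NumPy. This assumes the causal masking used by text-generating LLMs (each token sees only itself and earlier tokens) and the standard scaling by the square root of the key dimension; the sizes are arbitrary and the random weight matrices are hypothetical stand-ins for learned parameters.

```python
import numpy as np


def softmax(x, axis=-1):
    x = x - x.max(axis=axis, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)


def causal_self_attention(X, W_q, W_k, W_v):
    """One attention head. X has shape (n_tokens, d_model)."""
    Q, K, V = X @ W_q, X @ W_k, X @ W_v          # per-token query, key, value
    scores = Q @ K.T / np.sqrt(Q.shape[-1])       # similarity of each query to each key
    n = X.shape[0]
    future = np.triu(np.ones((n, n), dtype=bool), k=1)
    scores = np.where(future, -np.inf, scores)    # a token may not look at later tokens
    weights = softmax(scores, axis=-1)            # each row sums to 1
    return weights @ V, weights                   # weighted blend of the values


# Hypothetical sizes and random weights standing in for learned parameters.
rng = np.random.default_rng(0)
n_tokens, d_model, d_head = 5, 16, 8
X = rng.normal(size=(n_tokens, d_model))          # token embeddings
W_q, W_k, W_v = (rng.normal(size=(d_model, d_head)) for _ in range(3))
out, weights = causal_self_attention(X, W_q, W_k, W_v)
print(out.shape)                                  # (5, 8)
print(np.round(weights, 2))
```

Multi-head attention runs several copies of this function, each with its own `W_q`, `W_k`, `W_v`, and concatenates their outputs.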

Idea 4: The Transformer

The transformer stacks attention layers into a deep network. Each layer does two things:

- Self-attention — relate tokens to each other

- Feed-forward network — process each token individually

Frontier models stack dozens to over a hundred of these layers.

Earlier layers tend to capture local and syntactic patterns; deeper layers capture more abstract relationships used in reasoning. By stacking, the same token gets different representations in different contexts: “bank” in finance vs. “bank” of a river.
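The two steps of a layer, stacked, can be sketched in NumPy. Beyond what the slide describes, this sketch assumes common ingredients of real transformers: residual connections (each step adds to its input rather than replacing it), layer normalization, and an output projection `W_o`. The weights are random stand-ins, and it omits multi-head attention, positional information, and learned normalization parameters.

```python
import numpy as np


def layer_norm(x, eps=1e-5):
    mu = x.mean(axis=-1, keepdims=True)
    var = x.var(axis=-1, keepdims=True)
    return (x - mu) / np.sqrt(var + eps)


def softmax(x):
    e = np.exp(x - x.max(axis=-1, keepdims=True))
    return e / e.sum(axis=-1, keepdims=True)


def self_attention(x, p):
    q, k, v = x @ p["W_q"], x @ p["W_k"], x @ p["W_v"]
    n = x.shape[0]
    scores = q @ k.T / np.sqrt(q.shape[-1])
    scores = np.where(np.triu(np.ones((n, n), dtype=bool), k=1), -np.inf, scores)
    return softmax(scores) @ v @ p["W_o"]


def feed_forward(x, p):
    # ReLU here; many LLMs use GELU or SwiGLU instead.
    return np.maximum(0.0, x @ p["W_1"]) @ p["W_2"]


def transformer_layer(x, p):
    x = x + self_attention(layer_norm(x), p)  # 1. relate tokens to each other
    x = x + feed_forward(layer_norm(x), p)    # 2. process each token individually
    return x


def init_layer(d_model, rng):
    """Random weights standing in for learned parameters (hypothetical sizes)."""
    s = 1.0 / np.sqrt(d_model)
    p = {name: rng.normal(0, s, (d_model, d_model)) for name in ("W_q", "W_k", "W_v", "W_o")}
    p["W_1"] = rng.normal(0, s, (d_model, 4 * d_model))
    p["W_2"] = rng.normal(0, s / 2, (4 * d_model, d_model))
    return p


def transformer(x, layers):
    for p in layers:                           # same shape in, same shape out
        x = transformer_layer(x, p)
    return x


rng = np.random.default_rng(0)
d_model, n_layers = 16, 4
layers = [init_layer(d_model, rng) for _ in range(n_layers)]
x = rng.normal(size=(6, d_model))             # 6 token embeddings
print(transformer(x, layers).shape)           # (6, 16)
```

Because each layer maps a (tokens × d_model) array to the same shape, layers can be stacked to any depth; frontier models repeat this block dozens of times with a far larger `d_model`.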

Scale

| Model | Parameters | Training Data | Training Cost |
|---|---|---|---|
| GPT-2 (2019) | 1.5 billion | 40 GB text | ~$50K |
| GPT-3 (2020) | 175 billion | 570 GB text | ~$5M |
| GPT-4 (2023) | undisclosed (rumored >1T) | rumored ~13 trillion tokens | ~$100M (estimate) |
| Claude (2024–25) | undisclosed | undisclosed | undisclosed |

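The trend: parameters grew roughly 100x from GPT-2 to GPT-3 in a single year, and training data and cost have grown by roughly an order of magnitude or more per generation. Training a frontier model requires thousands of GPUs running for months. Frontier labs such as OpenAI and Anthropic no longer disclose parameter counts, but you can access the result for a few dollars per million tokens.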
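The growth factors implied by the table can be checked directly. This sketch uses only the GPT-2 and GPT-3 rows; GPT-4 is left out because its figures are unofficial and its data size is quoted in tokens rather than GB.

```python
# Figures from the table above (GPT-2 and GPT-3 rows only).
models = {
    "GPT-2 (2019)": {"parameters": 1.5e9, "data_gb": 40, "cost_usd": 50e3},
    "GPT-3 (2020)": {"parameters": 175e9, "data_gb": 570, "cost_usd": 5e6},
}


def growth(old, new):
    """Ratio new/old for each quantity."""
    return {key: new[key] / old[key] for key in old}


ratios = growth(models["GPT-2 (2019)"], models["GPT-3 (2020)"])
for key, ratio in ratios.items():
    print(f"{key}: {ratio:.0f}x")
```

Between these two models, parameters grew about 117x and cost about 100x, while training text grew about 14x.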

Training: What Gets Optimized

An LLM has two types of learnable parameters, trained jointly on one objective — predict the next token:

Embedding Parameters

- The embedding table (token → vector)

- Hundreds of millions of parameters

- Learns what each token means

Transformer Parameters

- Attention weights (Q, K, V per layer)

- Feed-forward weights per layer

- Billions to trillions of parameters

- Learns how to combine meanings
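The split between the two groups can be estimated from a model’s shape. This back-of-the-envelope sketch assumes a standard transformer layer (four attention matrices of size d_model × d_model and a feed-forward block with a 4x expansion) and ignores biases, layer norms, and positional parameters. The configuration plugged in is GPT-3’s published one: 96 layers, d_model = 12,288, and a 50,257-token vocabulary.

```python
def approx_param_count(n_layers, d_model, vocab_size, ffn_mult=4):
    """Rough count: ignores biases, layer norms, and positional parameters."""
    embedding = vocab_size * d_model                   # token -> vector table
    attention = 4 * d_model * d_model                  # W_q, W_k, W_v, W_o per layer
    feed_forward = 2 * d_model * (ffn_mult * d_model)  # up- and down-projection per layer
    transformer = n_layers * (attention + feed_forward)
    return embedding, transformer


emb, tfm = approx_param_count(n_layers=96, d_model=12_288, vocab_size=50_257)
print(f"embedding:   {emb / 1e9:.2f}B")
print(f"transformer: {tfm / 1e9:.1f}B")
print(f"total:       {(emb + tfm) / 1e9:.1f}B")
```

The estimate lands within about 1% of GPT-3’s reported 175 billion parameters, with the embedding table in the hundreds of millions and the transformer layers holding nearly everything else.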

At each position, the model guesses the next token; the loss penalizes wrong guesses. Both parameter types evolve together. A single document of 1,000 tokens provides 999 prediction tasks — multiply by trillions, and you see why the model learns so much.
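The “loss penalizes wrong guesses” step is the cross-entropy of next-token prediction. In this NumPy sketch the document and the model scores are hypothetical: random token ids and an untrained model that gives every token the same score, whose loss should equal log(vocabulary size).

```python
import numpy as np


def next_token_loss(logits, token_ids):
    """Average cross-entropy for next-token prediction.

    logits:    (n, vocab) scores the model produced at each of n positions
    token_ids: (n,) the actual tokens; position i is graded on token i + 1
    """
    logits = logits[:-1]                  # the last position has no next token
    targets = token_ids[1:]               # n tokens -> n - 1 prediction tasks
    z = logits - logits.max(axis=1, keepdims=True)
    log_probs = z - np.log(np.exp(z).sum(axis=1, keepdims=True))
    # Penalize low probability on the token that actually came next.
    return -log_probs[np.arange(len(targets)), targets].mean()


rng = np.random.default_rng(0)
vocab_size, n = 50, 1_000
token_ids = rng.integers(0, vocab_size, size=n)  # a hypothetical 1,000-token document
untrained = np.zeros((n, vocab_size))            # equal scores for every token
print(len(token_ids[1:]))                        # 999 prediction tasks
print(next_token_loss(untrained, token_ids), np.log(vocab_size))
```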

Training in Practice

- Process data in batches (for frontier models, often millions of tokens per batch), adjusting parameters after each batch

- Distributed across thousands of GPUs running in parallel

- Training runs continuously for months

Each step adjusts billions of parameters simultaneously, making the model slightly better at predicting the next token in that batch and, on average, in text like it.
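The batch → predict → loss → gradients → update cycle can be sketched at toy scale. Here a bigram table (each next-token score depends only on the previous token) stands in for the transformer, the “text” is a hypothetical repeating pattern, and the batch size, learning rate, and step count are arbitrary choices.

```python
import numpy as np


def train_bigram(token_ids, vocab_size, steps=200, batch_size=32, lr=1.0, seed=0):
    """Toy next-token model: W[a, b] is the score for token b following token a."""
    rng = np.random.default_rng(seed)
    token_ids = np.asarray(token_ids)
    inputs, targets = token_ids[:-1], token_ids[1:]
    W = np.zeros((vocab_size, vocab_size))
    losses = []
    for _ in range(steps):
        idx = rng.integers(0, len(inputs), size=batch_size)  # 1. batch
        x, y = inputs[idx], targets[idx]
        logits = W[x]                                        # 2. predict
        z = logits - logits.max(axis=1, keepdims=True)
        probs = np.exp(z) / np.exp(z).sum(axis=1, keepdims=True)
        rows = np.arange(batch_size)
        losses.append(-np.log(probs[rows, y]).mean())        # 3. loss
        grad_logits = probs.copy()                           # 4. gradient of the
        grad_logits[rows, y] -= 1.0                          #    cross-entropy loss
        grad_logits /= batch_size
        grad_W = np.zeros_like(W)
        np.add.at(grad_W, x, grad_logits)                    # accumulate per input token
        W -= lr * grad_W                                     # 5. update
    return W, losses


# Hypothetical "text": a repeating pattern of 4 token ids.
data = [0, 1, 2, 3] * 250
W, losses = train_bigram(data, vocab_size=4)
print(f"loss: {losses[0]:.3f} -> {losses[-1]:.3f}")
print("predicted next token after 0:", int(np.argmax(W[0])))
```

A real training run follows the same loop, except the “predict” step is a full transformer and the gradients are computed automatically by a deep learning framework.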

From Text Predictor to Assistant

Pre-Training vs. Fine-Tuning

Pre-Training

- Train on massive text corpora — web, books, code (trillions of tokens)

- Objective: predict the next word

- Result: a model that can continue any text

- Knows facts, grammar, reasoning patterns

- But: will also continue toxic, unhelpful, or wrong text

Fine-Tuning + RLHF

- Train on curated examples of helpful conversations

- Reinforcement Learning from Human Feedback

- Human raters rank model outputs

- Model learns to prefer helpful, harmless, honest responses

- Turns a text predictor into an assistant

Pre-training gives the model knowledge. Fine-tuning gives it values and behavior. Without fine-tuning and RLHF, a pre-trained model would readily generate harmful content or state falsehoods with confidence; these steps reduce such behavior but do not eliminate it.

RLHF: Teaching the Model to Be Helpful

- Supervised fine-tuning: show the model examples of good conversations

- Reward modeling: humans compare pairs of responses → train a model that predicts which response humans prefer

- RL optimization: the language model generates responses, the reward model scores them, and the language model is updated to produce higher-scoring responses
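The reward-modeling step is commonly trained with a pairwise (Bradley–Terry style) loss, the form described in OpenAI’s InstructGPT work: the reward model is penalized when it scores the human-preferred response below the rejected one. This is a sketch of that loss only, not any lab’s actual implementation, and the reward scores are hypothetical.

```python
import numpy as np


def pairwise_reward_loss(r_chosen, r_rejected):
    """Mean of -log(sigmoid(r_chosen - r_rejected)) over comparison pairs.

    Low when the reward model scores the human-preferred response higher.
    """
    diff = np.asarray(r_chosen, dtype=float) - np.asarray(r_rejected, dtype=float)
    # -log(sigmoid(d)) == log(1 + exp(-d)), computed stably with logaddexp.
    return float(np.mean(np.logaddexp(0.0, -diff)))


# Hypothetical reward scores for three comparisons (chosen vs. rejected).
print(pairwise_reward_loss([2.0, 1.5, 0.3], [0.0, 1.0, 0.3]))
print(pairwise_reward_loss([0.0], [3.0]))  # prefers the wrong response: high loss
```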

Anthropic uses a variant called Constitutional AI, in which the model also critiques its own outputs against a set of principles, reducing the need for human raters.

This is why Claude refuses harmful requests, admits uncertainty, and follows instructions. The base model learned to mimic text; supervised fine-tuning and RLHF reshape its behavior toward helpfulness, honesty, and safety — qualities that were not the objective of pre-training.

What This Means for You

Connecting to What We’ve Built

Now you can see the mechanism behind everything we’ve done this semester:

| What you did | What’s happening under the hood |
|---|---|
| System prompts and skills (M5) | Shifts the token distribution toward your domain |
| Makers and checkers (M6) | Counteracts the plausible-but-wrong tendency |
| RAG pipeline (M12) | Injects documents into context for attention to connect |
| Sentiment analysis (M13) | Prompts the model to apply sentiment patterns learned during training |
| Agent with tools (M14) | Model trained to output tool-call tokens; runtime executes them |
| Different answers each time | Temperature-based sampling from the distribution |

The architecture explains why these techniques work — not just that they work.

Why Prompts Matter

The model samples the next token conditioned on your prompt. More specific context → narrower distribution → better output.

Example

Vague: “Tell me about Apple’s financials” → the model could continue with consumer advice, a Wikipedia summary, or equity analysis. The distribution spreads across all of these.

Specific: “As a sell-side equity analyst, write a one-paragraph summary of Apple’s Q1 2025 revenue drivers” → the distribution collapses to a narrow, useful range.

System prompts and few-shot examples shift the distribution toward the outputs you want. Temperature controls how sharply the model commits: at T=0 it always picks the top token (deterministic); at higher T it samples more broadly (creative but riskier). Both prompting and temperature follow directly from how the model generates text.
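“Narrower distribution” can be made measurable with Shannon entropy: the more spread out the probabilities, the higher the entropy. The probabilities below are hypothetical, assigned to the kinds of continuation from the Apple example; a real model spreads probability over tokens, not labeled categories.

```python
import math


def entropy_bits(probs):
    """Shannon entropy in bits: higher = more spread out."""
    return -sum(p * math.log2(p) for p in probs if p > 0)


# Hypothetical probabilities over kinds of continuation, invented for illustration.
vague = {"consumer advice": 0.30, "Wikipedia-style summary": 0.35,
         "equity analysis": 0.25, "product news": 0.10}
specific = {"consumer advice": 0.01, "Wikipedia-style summary": 0.04,
            "equity analysis": 0.94, "product news": 0.01}

print(f"vague prompt:    {entropy_bits(vague.values()):.2f} bits")
print(f"specific prompt: {entropy_bits(specific.values()):.2f} bits")
```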

Why Context Windows Matter

The attention mechanism is what gives LLMs their context window:

- Each token attends to every previous token

- Cost grows with context length: each new token must attend to all previous tokens, so total attention work grows roughly quadratically with length. Long prompts are slower and more expensive

- Claude’s 200K token window ≈ 500 pages of typical prose
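Both numbers in this list can be reproduced with simple arithmetic. The conversion ratios (about 0.75 words per token, about 300 words per page) are rule-of-thumb assumptions, and the pair count assumes causal attention where every token attends to itself and all earlier tokens.

```python
def pages_for_tokens(n_tokens, words_per_token=0.75, words_per_page=300):
    """Rough conversion; both ratios are rule-of-thumb assumptions."""
    return n_tokens * words_per_token / words_per_page


def causal_attention_pairs(n_tokens):
    """Query-key pairs scored per head per layer: 1 + 2 + ... + n."""
    return n_tokens * (n_tokens + 1) // 2


print(pages_for_tokens(200_000))  # ~500 pages
for n in (10_000, 20_000, 200_000):
    print(f"{n:>7,} tokens -> {causal_attention_pairs(n):,} pairs")
```

Doubling the context roughly quadruples the number of pairs, which is why long prompts cost disproportionately more.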

This is why RAG works: you inject relevant documents into the context window, and the attention mechanism connects your question to the answer in those documents — even if it’s buried on page 47.

Why Hallucinations Happen

The model is trained to predict plausible next tokens, not true ones.

- If the training data contains conflicting information, the model blends it into its learned parameters

- If asked about something rare or absent from training data, it generates plausible-sounding text anyway

- The model has no mechanism to distinguish “I know this” from “this sounds right”

This is a fundamental tendency of the architecture, not a simple bug. The model maximizes fluency, not truth. It can be mitigated — RAG grounds responses in documents, tool use lets the agent check facts, verification protocols catch errors — but likely not eliminated entirely. This is why the makers-and-checkers pattern matters.

Summary

Building Blocks

- Tokens: sub-word chunks (~100K vocabulary)

- Embeddings: learned vector representations

- Attention: relate any token to any other

- Transformers: stack attention into deep networks

Training

- Objective: predict the next token

- Jointly optimize embeddings + transformer parameters

- Trillions of tokens, billions to trillions of parameters

- RLHF turns a predictor into an assistant

Implications

- Prompts condition the distribution

- RAG exploits attention over injected context

- Tool use is fine-tuned next-token behavior

- Hallucinations: fluency is not truth